Notes From Someone Who Touched Money Too Early

Most founders encounter capital late.

They graduate.

They build something.

Then eventually they raise money.

My experience with capital started much earlier, and in a much stranger way.

I was in class 10.

No startup.

No product.

Just an Instagram page, curiosity, and a habit of talking to people on the internet.

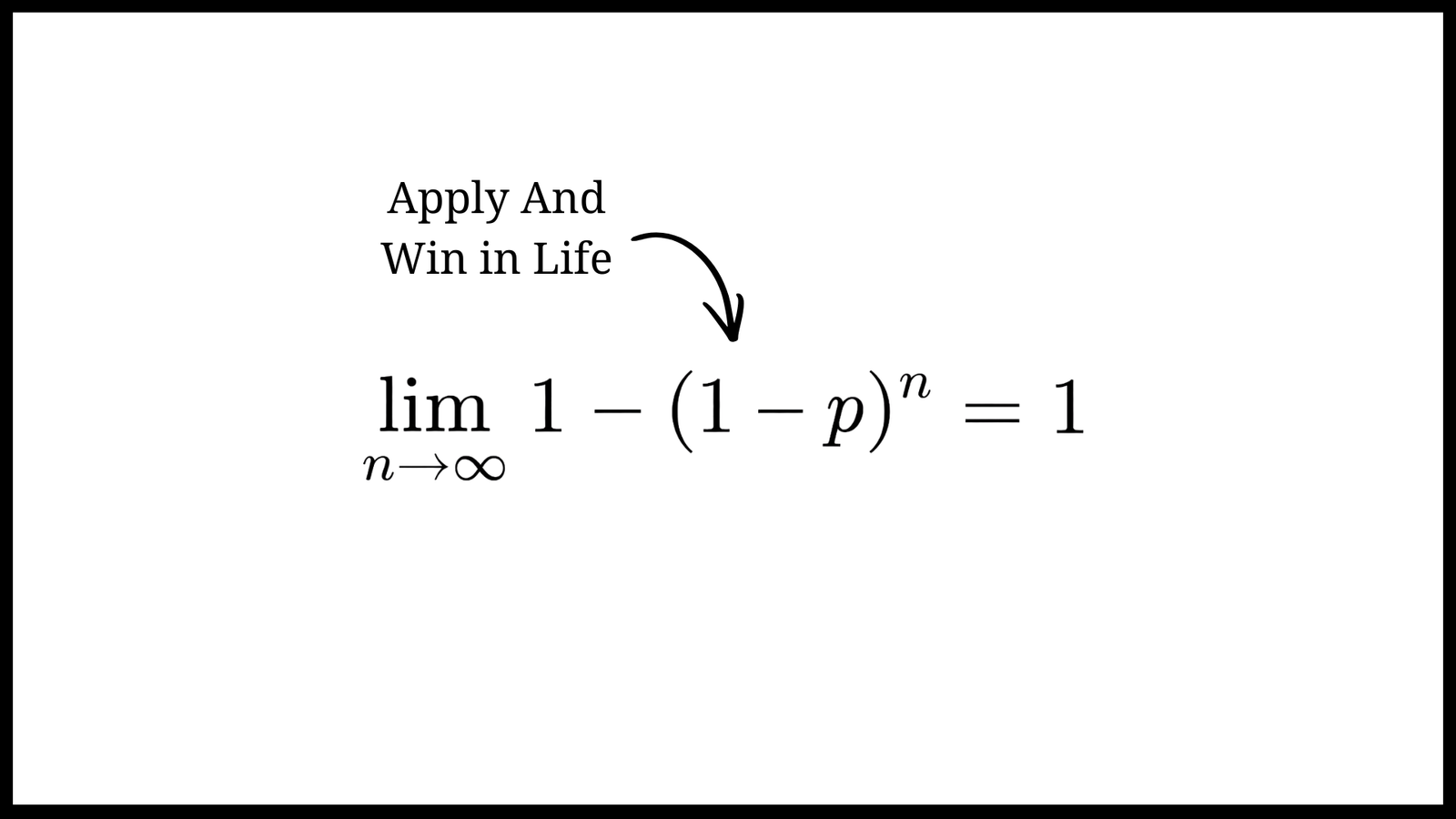

Networking, even before I knew the word for it, was the thing that opened most doors in my life.

One day a friend from Instagram asked me to design a logo for his page called “Billionaire’s Academy.”

That was my first paid project.

Five dollars.

I had never used Canva professionally before. I made a simple design and sent it to him. He liked the second version, and then he sent the money.

There was only one problem.

I was a minor.

I didn’t even have a bank account.

The money came into my father’s account.

A few days later he gave me another project: build a website. I charged around $70–80. I never fully delivered that project, but he still paid me.

I remember spending that money on clothes for my parents and my sisters.

For someone who had never earned before, that feeling was unreal.

That was my first encounter with capital.

Not venture capital.

Just the simple realization that ideas and effort could turn into money.

The Pattern I Later Noticed

Looking back, my life with money has followed a strange pattern.

I make some money.

Then everything goes to zero.

Then I make more money than I had ever seen before.

Then it collapses again.

This cycle has repeated many times.

When I was still in school, I made a few hundred rupees doing small work online. Then for six to eight months, nothing. Completely broke.

Later I started a small agency. Made a few thousand rupees.

Then again things slowed down.

Then a family friend funded one of my experiments. I spent most of that money trying different ideas. When the money ran out, I had to shut everything down and move from Delhi back to Patna.

Then I started again.

I worked with someone briefly and made around ₹20–30k.

Then I built a solo project that suddenly made tens of lakhs in revenue.

I thought I had figured everything out.

Then that collapsed too.

Then another opportunity came.

Then again experiments.

Then again near bankruptcy.

Then again another chance.

Grants. Incubation. New projects.

Over time I realized something about myself.

I am not a conservative operator.

I make money, and then I spend most of it on experiments.

Sometimes 80% of what I earn goes back into research, ideas, and risky bets.

It’s irrational.

But it’s also how I learn.

My Relationship With Money

I grew up in a lower-middle-class family.

Money mattered.

But something interesting happened when I started earning.

My parents never treated my money as theirs.

They never controlled it.

They allowed me to make mistakes with it.

That freedom shaped how I think about capital.

Very early I saw a quote circulating online — a Bill Gates quote, though I don’t know if he ever actually said it:

“People who earn hundreds don’t think about spending tens.”

That line stuck with me.

Instead of obsessing about saving every rupee, I became obsessed with increasing the size of the game.

Make more.

Build bigger things.

Take bigger risks.

The downside of this mindset is obvious.

I became an extravagant spender when it came to experiments.

But the upside is that I never developed fear around capital.

Money became a tool for exploration, not something sacred.

The Hard Lesson About Investors

One of the most complicated parts of my journey was working closely with investors and collaborators on multiple projects.

We built a lot of things.

Clubs.

AI tools.

Startup programs.

Research experiments.

Some of them worked. Many didn’t.

But the biggest lesson wasn’t about technology or business models.

It was about power dynamics around capital.

When someone invests money in you, even in exchange for equity, a subtle psychological shift often happens.

They begin to feel like they own the company.

And sometimes they start treating the founder as an operator rather than the builder.

This dynamic can become dangerous if you let it happen slowly over time.

Long conversations before an investment can create another problem: familiarity.

If investors spend too much time around you before investing, they sometimes start to see themselves as your manager rather than your partner.

That is not a healthy relationship.

A founder is not an employee.

Even when someone invests in your company.

They are buying equity.

They are not buying you.

This distinction matters more than most young founders realize.

What Most Young Founders Misunderstand About Capital

Many young founders believe raising money is validation.

It isn’t.

Raising money simply means someone believes you might succeed.

That’s it.

Investors don’t fund the “best ideas.”

They fund the most probable winners.

That probability can come from many things:

- elite universities

- strong networks

- previous successes

- market momentum

- or simply pattern matching against past winners

In India, if you are from a top-tier institution, doors open faster.

In the US, if you are from Stanford or MIT, credibility comes almost automatically.

This is uncomfortable to admit, but it is the reality of venture capital.

Venture capital is not a purely meritocratic system.

It is a probability machine.

Seeing Both Sides Of Capital

I have been on both sides.

As a founder needing capital.

And as someone responsible for allocating capital.

When you are the person giving money, something strange happens psychologically.

You start to feel slightly superior.

Even if logically you know the founder is the one building the company.

Many investors start believing they know more than the founders.

Often they don’t.

Especially in technical fields.

Today, with AI tools like Claude, Gemini, and Copilot, many investors code small demos and start believing they understand the product deeply.

They don’t.

Building real systems is very different from generating prototypes.

But founders also make a mistake.

If your moat is only technology, you will eventually get crushed.

Technology is getting commoditized very fast.

Your real moat must exist somewhere deeper: distribution, insight, systems, or execution speed.

My Personal Rule With Capital

I am still learning this.

But if I could give one rule to younger founders, it would be this:

Don’t raise money before reality validates your idea.

Reality means:

- users

- traction

- real usage

- ideally revenue

Today it is easier than ever to build.

You can ship products quickly.

You can test ideas with real users.

You can distribute through content and community.

Use those tools first.

Let traction attract capital.

Don’t chase investors too early.

A Strange Analogy I Sometimes Use

Venture capital behaves a bit like social attention.

If you chase it desperately, it runs away.

But if you focus on becoming interesting — building things, solving real problems, shipping constantly — attention eventually comes to you.

Investors are the same.

Build something real.

And eventually they start showing up.

Final Thought

My journey with capital has never been stable.

It has been chaotic.

Money comes.

Money disappears.

Experiments succeed.

Experiments fail.

But one thing has remained constant.

Every time I lost money, I gained better questions.

And in the long run, good questions are worth far more than early capital.